Most years, the CDSC is lucky enough to recruit some amazing new Ph.D. students to the lab. This fall is no exception and we are thrilled to welcome an extraordinary group across several of our group’s campuses. The students join us from a wide variety of places, backgrounds, and prior affiliations (which should be encouraging for any prospective students looking to join the group in the future!). Some short bios and photos follow below (in alphabetical order by last name) with the text largely taken from the people page on our wiki. You can look forward to reading more about their research in the coming years!

Eric Fassbender is a first year PhD student in the Media Technology and Society program at Northwestern University. He is interested in studying technology adoption as an expression of resistance and protest. He is currently researching the ways that people form decisions to leave online groups around issues of surveillance and political alignment. You can learn more about him on his website here or on mastodon here. Outside of work, Eric loves reading sci-fi, all things cyberpunk, and hiking to improve his landscape photography.

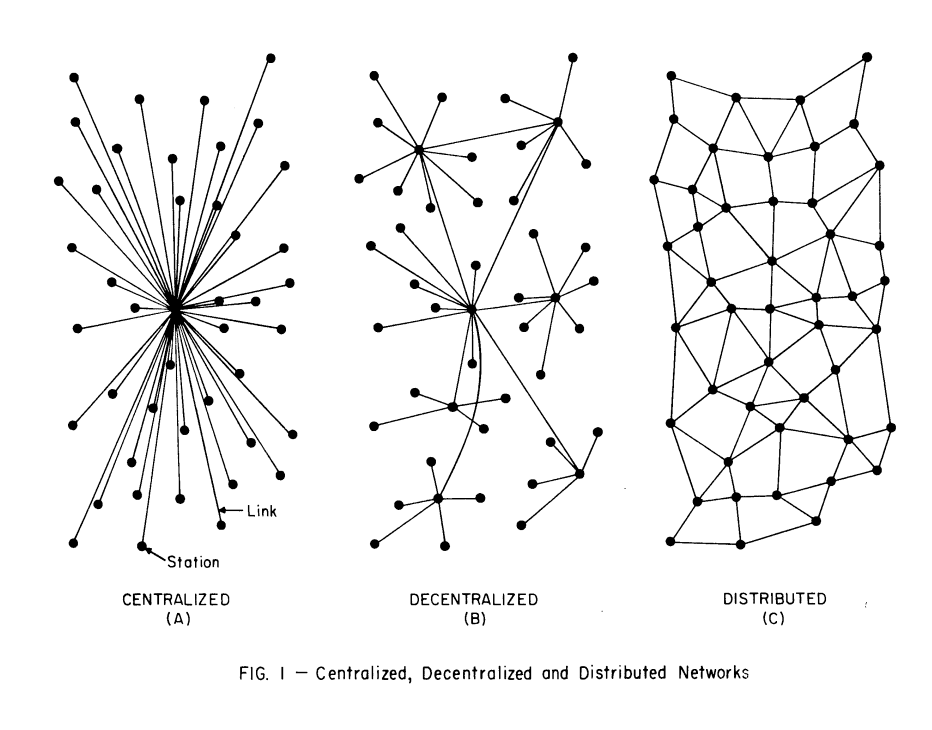

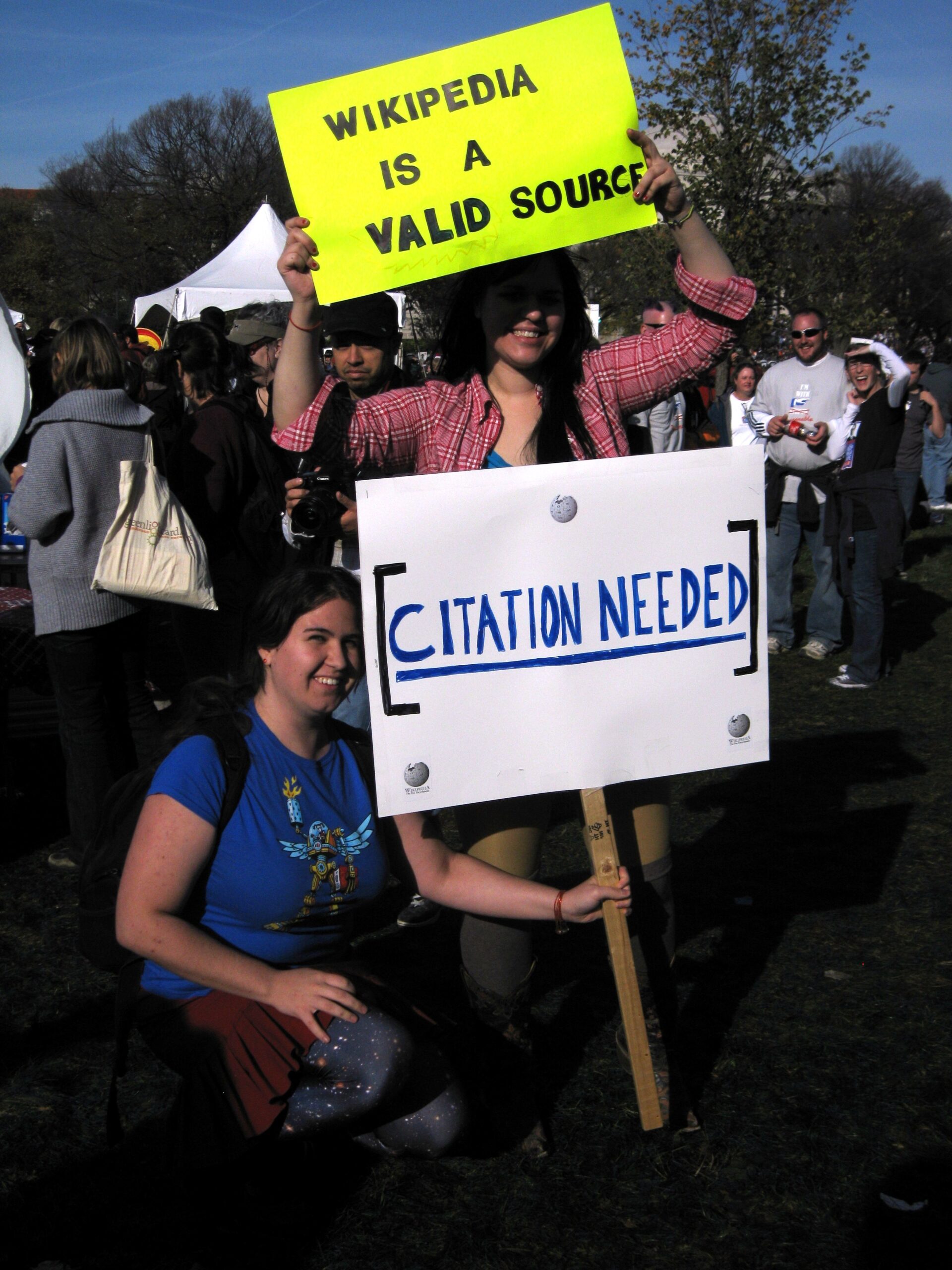

Jonghyun Jee (pronounced Jong-H-yuh-n, not Hyoon) is a first-year PhD student in the Media, Technology, and Society program at Northwestern University. He studies how online communities create and enforce their rules. His research has looked at a range of platforms, from established ones like Wikipedia, YouTube, and Discord to decentralized networks such as Bluesky and Mastodon. Lately, Jonghyun has been exploring how to use LLMs to simulate these social environments at scale. He’s driven by the belief that critiques of technology (even dystopian ones) are less calls for its undoing than invitations to reimagine it. When Jonghyun procrastinates, he practices zen meditation and writes short film synopses.

Manish Kumar is a first-year PhD student at the School of Information at the University of Texas at Austin advised by Dr Nathan TeBlunthuis and Dr Edgar Gómez Cruz. Manish’s work explores political expression on social media and how it connects to people’s offline relationships. He’s fascinated by human experiences at scale, and tries to bridge qualitative inquiry with computational techniques to capture that complexity. Broadly, Manish studies how social media/technology becomes woven into people’s everyday political sensemaking. Manish grew up in Patna, India. He earned a degree in Information Science & Engineering and spent a few years as a software developer, but has always been drawn to the sociological side of technology. That curiosity took Manish to UC Berkeley for a Master’s of Information Management and Systems, where he discovered research and got completely hooked. In his free time, Manish like to do nerdy stuff like reading historical fiction, going on walking tours, learning about local history (that includes the petty neighbourhood rivalries) and going to museums.

Jianghui Li is a first-year PhD student at the University of Texas at Austin’s School of Information, advised by Dr. Nathan TeBlunthuis. He is interested in researching belief dynamics, collective behavior, and sustainability in sociotechnical systems through the lens of complex adaptive systems. Before studying at UT Austin, Jianghui earned bachelor’s and master’s degrees at Syracuse University’s School of Information Studies, and he misses the cool Syracuse weather Outside of research, Jianghui enjoys fishing, learning about fish, and sometimes thinking about the similarities between ecological systems and human networks.

Dylan Smith is a first-year MA/PhD student at University of Washington—Seattle in Communication. They grew up in Portland, Oregon and got a bachelor’s degree in Computer Science at Carleton College in Minnesota. Dylan’s research interests are in online interpersonal communication and online governance. For the past few years, Dylan has been working on a research project studying Wikipedia’s arbitration process. In their free time, Dylan likes reading fiction, spending time with friends, hiking, and long-distance running. Last Spring, Dylan ran their first marathon!!!

Ran Tang is a MA/PhD student in the Department of Communication at the University of Washington. Her research focuses on the moderation of online communities. She primarily use qualitative methods to study the daily work of volunteer moderators, and is also exploring the use of quantitative approaches in future projects. In her free time, Ran enjoys playing table tennis and swimming.

Yiwei Wu is a first-year PhD student at UT Austin. Previously, she attended the University of Washington for her bachelor’s degree. Her research interests include online collective action, peer production, and community data governance. In her free time, Yiwei enjoys baking, playing musical instruments (bass and Chinese flute), and playing farming games (e.g., Stardew Valley).